Instructions to use wisdomik/Quilt-Llava-v1.5-7b with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- Transformers

How to use wisdomik/Quilt-Llava-v1.5-7b with Transformers:

```python
# Use a pipeline as a high-level helper
from transformers import pipeline

pipe = pipeline("text-generation", model="wisdomik/Quilt-Llava-v1.5-7b")
```

```python
# Load model directly
from transformers import AutoProcessor, AutoModelForCausalLM

processor = AutoProcessor.from_pretrained("wisdomik/Quilt-Llava-v1.5-7b")
model = AutoModelForCausalLM.from_pretrained("wisdomik/Quilt-Llava-v1.5-7b")
```

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- vLLM

How to use wisdomik/Quilt-Llava-v1.5-7b with vLLM:

Install from pip and serve model

```bash
# Install vLLM from pip:
pip install vllm

# Start the vLLM server:
vllm serve "wisdomik/Quilt-Llava-v1.5-7b"

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:8000/v1/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "wisdomik/Quilt-Llava-v1.5-7b",
    "prompt": "Once upon a time,",
    "max_tokens": 512,
    "temperature": 0.5
  }'
```

Use Docker

```bash
docker model run hf.co/wisdomik/Quilt-Llava-v1.5-7b
```

- SGLang

How to use wisdomik/Quilt-Llava-v1.5-7b with SGLang:

Install from pip and serve model

```bash
# Install SGLang from pip:
pip install sglang

# Start the SGLang server:
python3 -m sglang.launch_server \
  --model-path "wisdomik/Quilt-Llava-v1.5-7b" \
  --host 0.0.0.0 \
  --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "wisdomik/Quilt-Llava-v1.5-7b",
    "prompt": "Once upon a time,",
    "max_tokens": 512,
    "temperature": 0.5
  }'
```

Use Docker images

```bash
docker run --gpus all \
  --shm-size 32g \
  -p 30000:30000 \
  -v ~/.cache/huggingface:/root/.cache/huggingface \
  --env "HF_TOKEN=<secret>" \
  --ipc=host \
  lmsysorg/sglang:latest \
  python3 -m sglang.launch_server \
    --model-path "wisdomik/Quilt-Llava-v1.5-7b" \
    --host 0.0.0.0 \
    --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "wisdomik/Quilt-Llava-v1.5-7b",
    "prompt": "Once upon a time,",
    "max_tokens": 512,
    "temperature": 0.5
  }'
```

- Docker Model Runner

How to use wisdomik/Quilt-Llava-v1.5-7b with Docker Model Runner:

```bash
docker model run hf.co/wisdomik/Quilt-Llava-v1.5-7b
```
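The curl calls in the vLLM and SGLang sections can also be issued from Python. The following is a minimal sketch using only the standard library. The helper names (`build_completion_payload`, `build_request`, `complete`) are hypothetical, and the response parsing assumes the server returns the usual OpenAI-style completions body with a `choices` list. It also assumes a server is already running and was able to load this checkpoint, which the snippets above do not guarantee.

```python
import json
import urllib.request

MODEL_ID = "wisdomik/Quilt-Llava-v1.5-7b"


def build_completion_payload(prompt, max_tokens=512, temperature=0.5, model=MODEL_ID):
    """Return the same JSON body that the curl examples send."""
    return {
        "model": model,
        "prompt": prompt,
        "max_tokens": max_tokens,
        "temperature": temperature,
    }


def build_request(base_url, payload):
    """Build a POST request for the /v1/completions endpoint (hypothetical helper)."""
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(base_url, prompt, timeout=60.0, **payload_options):
    """Send the prompt to a running server and return the generated text.

    Assumes an OpenAI-style response: {"choices": [{"text": ...}, ...]}.
    """
    request = build_request(base_url, build_completion_payload(prompt, **payload_options))
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["text"]
```

With a server running, `complete("http://localhost:8000", "Once upon a time,")` would target the vLLM port used above, and `complete("http://localhost:30000", "Once upon a time,")` the SGLang port.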

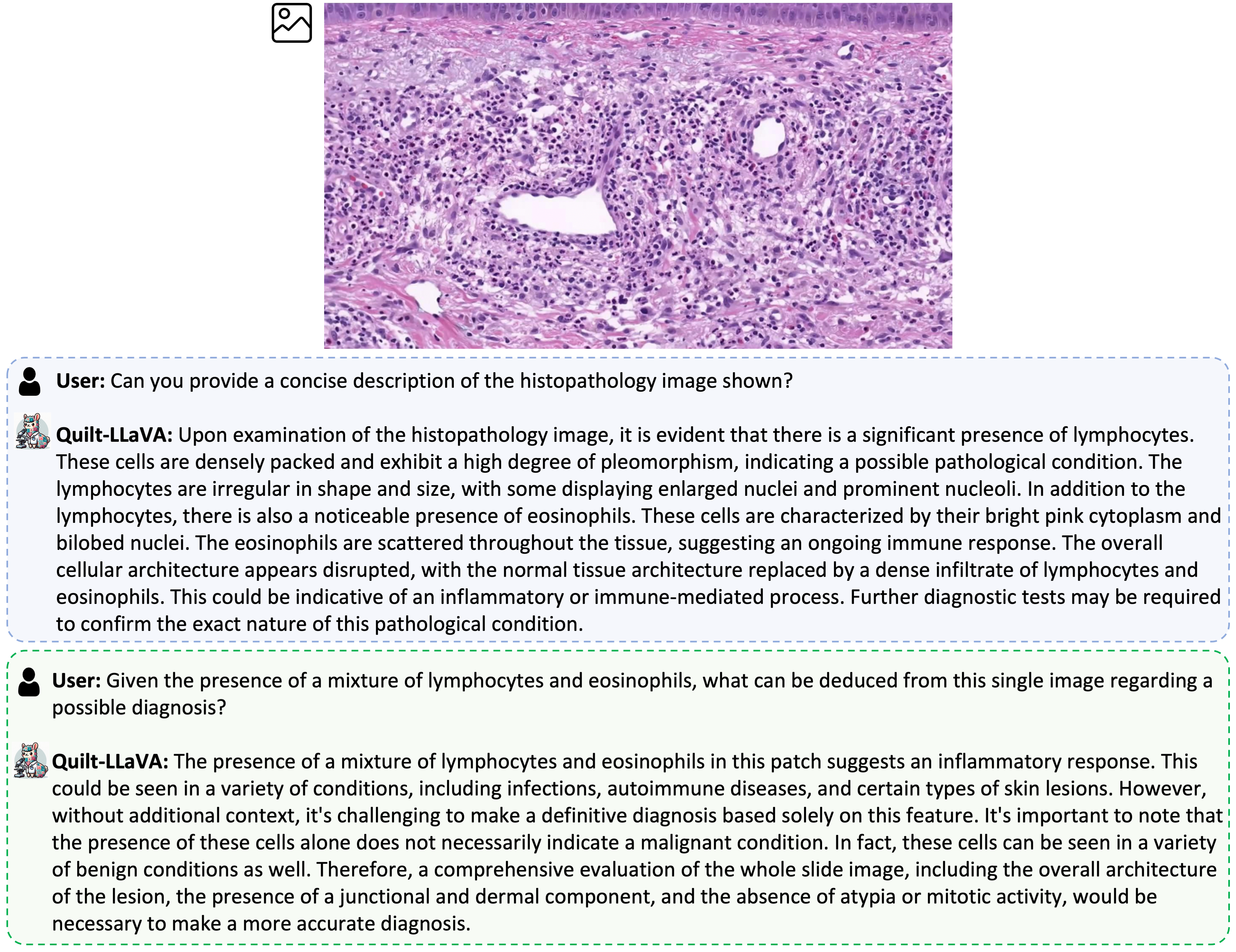

Quilt-LLaVA Model Card

Model details

Model type: Quilt-LLaVA is an open-source chatbot trained by fine-tuning LLaMA/Vicuna on images sourced from educational histopathology videos and on GPT-generated multimodal instruction-following data. It is an auto-regressive language model based on the transformer architecture.
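Because the model is fine-tuned from Vicuna in the LLaVA style, prompts are usually wrapped in a conversation template before generation. The sketch below assumes the Vicuna v1 template used by upstream LLaVA v1.5 (system sentence, `USER:` / `ASSISTANT:` roles, and an `<image>` placeholder). This card does not state the template, so treat the constants and the `build_prompt` helper as assumptions to verify against the Quilt-LLaVA code.

```python
# Assumption: the Vicuna v1 conversation template from upstream LLaVA v1.5.
# This model card does not confirm it; check the Quilt-LLaVA repository.
SYSTEM_PROMPT = (
    "A chat between a curious human and an artificial intelligence assistant. "
    "The assistant gives helpful, detailed, and polite answers to the human's questions."
)
IMAGE_TOKEN = "<image>"  # placeholder that the image features replace (assumed)


def build_prompt(question, with_image=True):
    """Format a single-turn prompt (hypothetical helper, not part of any library)."""
    user_turn = f"{IMAGE_TOKEN}\n{question}" if with_image else question
    return f"{SYSTEM_PROMPT} USER: {user_turn} ASSISTANT:"
```

For example, `build_prompt("Describe the tissue in this image.")` yields a string that ends with `ASSISTANT:`, leaving the model to generate the answer as the continuation.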

Citation

```bibtex
@article{seyfioglu2023quilt,
  title={Quilt-LLaVA: Visual Instruction Tuning by Extracting Localized Narratives from Open-Source Histopathology Videos},
  author={Seyfioglu, Mehmet Saygin and Ikezogwo, Wisdom O and Ghezloo, Fatemeh and Krishna, Ranjay and Shapiro, Linda},
  journal={arXiv preprint arXiv:2312.04746},
  year={2023}
}
```

Model date: Quilt-LLaVA-v1.5-7B was trained in November 2023.

Paper or resources for more information: https://quilt-llava.github.io/

License

Llama 2 is licensed under the LLAMA 2 Community License, Copyright (c) Meta Platforms, Inc. All Rights Reserved.

Where to send questions or comments about the model: https://github.com/quilt-llava/quilt-llava.github.io/issues

Intended use

Primary intended uses: The primary use of Quilt-LLaVA is research on medical large multimodal models and chatbots.

Primary intended users: The primary intended users of these models are AI researchers.

We expect researchers to use the model mainly to better understand the robustness, generalization, capabilities, biases, and constraints of large generative vision-language models for histopathology.

Training dataset

- 723K filtered image-text pairs from QUILT-1M: https://quilt1m.github.io/

- 107K GPT-generated multimodal instruction-following samples from QUILT-Instruct: https://huggingface.co/datasets/wisdomik/QUILT-LLaVA-Instruct-107K

Evaluation dataset

A collection of four academic histopathology VQA benchmarks.
